The Potential Problem No One Is Talking About

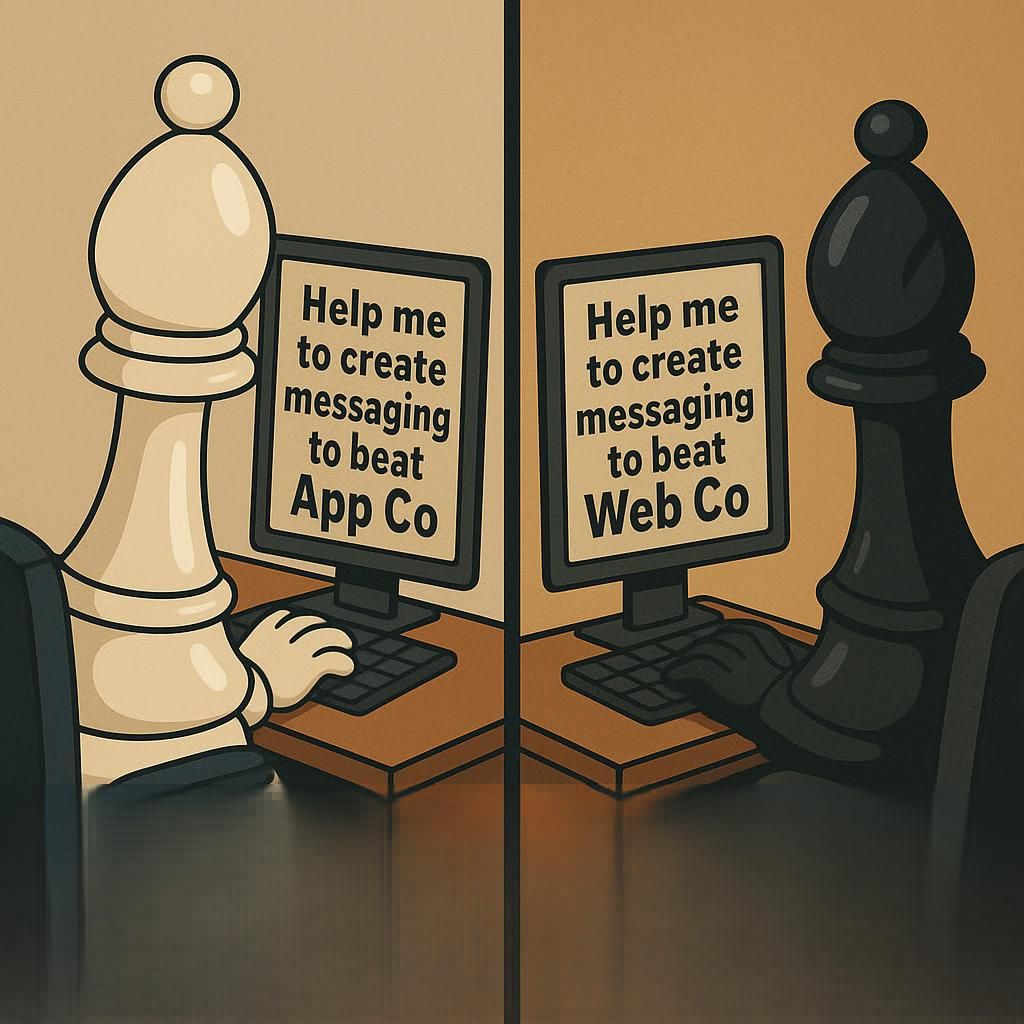

Imagine you’re leading marketing at a fast‑growing software company. You have a direct competitor whose product provides functionality similar to yours. Both companies are aggressive and ambitious, pursuing the same customer segments. Your CEO, hoping to accelerate your positioning and sharpen your message, decides that the company will rely heavily on an advanced AI platform for core product marketing and messaging. While this approach might seem efficient, I believe it’s fundamentally flawed—a perspective I’ve explored in greater detail here. Instead of building the strategy through research, brainstorming, and testing, the team integrates the AI deeply into the process, prompting, refining, iterating, and eventually shaping a cohesive framework that feels convincing enough to take to market.

You launch the new positioning and campaigns with confidence. But your competitor launches their own messaging shortly after – and outmaneuvers you. Their story resonates more clearly with your ideal customer profile. Their framing is tighter, their narrative more compelling, and their differentiation sharper. You lose deals. You lose momentum. Eventually, you lose market share.

Months later, a former employee from the competitor joins your team. Over a casual conversation, you learn something that changes the way you think about what happened: both companies had relied on the same AI platform to develop their messaging. Same model. Same general use case. Same strategic purpose.

Except your competitor got materially better outputs.

Your CEO is furious. The question becomes unavoidable: Did the AI system give better results to the other side? And if so, was it an unfair advantage? Even if prompting skill, internal strategy, and human judgment all played roles, the suspicion remains. And it leads to a more profound, future‑oriented question: If AI becomes a central contributor to strategy, will AI platforms eventually need to perform conflict checks?

At first glance, it sounds like a stretch. But consider how conflict checking works today in the legal world.

How Law Firm Conflict Checking Works

Law firms must follow strict procedures to identify and avoid conflicts of interest. Rules generally prohibit taking on clients with directly opposing interests, at least without each client’s informed consent. A law firm representing two adversarial companies in the same dispute is unthinkable. Representing two competitors in unrelated matters can still trigger disclosure or client-consent requirements. Firms therefore run a conflict check even when no conflict is obvious. The process is not reactive; it’s formalized and preventative. These systems exist because legal counsel has privileged insight, access to confidential information, and the power to influence outcomes. To prevent misuse – or even the perception of misuse – of that information, conflict checking procedures are mandatory.

What This Has To Do With AI

Presently, AI systems aren’t representing anyone. They don’t sign ethical oaths. They don’t promise confidentiality in the traditional sense. But more and more companies, especially founder-led tech businesses, are delegating key strategic functions to AI, including messaging, product positioning, pricing guidance, competitive analysis, investor narratives, and even long‑term planning. In short, some companies are beginning to rely on AI in a role that looks uncomfortably similar to a professional services provider.

In the scenario above, your CEO might reasonably argue that, had the company known the competitor was using the same AI engine for the same purpose, it might not have used that engine at all.

Why This Discussion Is Coming Soon

In start‑ups and PE‑backed companies, the stakes are inherently high. Significant capital is deployed early, expectations are aggressive, and the upside for the winners can be enormous. That dynamic creates real pressure on leadership teams, boards, and investors, and when things go sideways, it’s not unusual for companies or their backers to look for redress. These environments move fast, decisions carry outsized consequences, and disputes often sit at the intersection of governance and investor expectations. A founder or investor attributing some accountability for failure to an AI provider is more a question of ‘when’ than ‘if’.

Some large enterprises will deploy private or isolated instances of AI models, but that doesn’t eliminate the underlying issue. Even in those environments, the model’s architecture, training data, and reasoning patterns are shared across customers. A private instance may prevent data leakage, but it doesn’t prevent strategic convergence. If two competitors rely on the same underlying model to shape their positioning or product strategy, the conflict‑of‑interest question applies regardless of whether their deployments sit on shared infrastructure or behind a corporate firewall.

Virtually all AI companies require assent to click‑wrap agreements designed to limit their exposure, but those provisions will be tested when real money is on the line. People who’ve lost significant sums aren’t going to simply shrug and walk away; they’ll challenge the scope and applicability of those limitations, argue that their work falls outside what the agreement contemplated, or contend that the nature of their contributions made a blanket liability cap inappropriate. In high‑stakes environments, those fights are predictable.

Whether plaintiffs will succeed in challenging the limitations of liability is anyone’s guess. As with most novel legal questions, there will be initial rulings in multiple jurisdictions, followed by second and third waves of cases claiming to fall outside the contours of those rulings. Whether plaintiffs score big wins early or must seek exceptions to initial setbacks, AI leaders will likely decide that money spent on preventing future legal actions almost always goes further than money spent defending lawsuits.

How A Focus on Lawsuit Prevention Might Change Things

Unless there is unambiguous federal legislation or some other unexpected development, AI companies will need to handle their obligations to clients who use AI for strategy differently from their obligations to transactional users.

Outcome 1: AI Providers Are Required to Disclose Conflicts of Interest

In this future, AI vendors would maintain some form of conflict‑checking infrastructure. For example, if two companies in the same industry request strategic guidance or messaging development, the AI platform might be required to disclose: “Another client in your competitive space is using this system for a similar purpose.” This mirrors legal industry norms: disclosure without breaching confidentiality.
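
To make the disclosure idea concrete, here is a minimal Python sketch of what such a check might look like. Everything in it is hypothetical: the `Engagement` fields, the `ConflictRegistry` class, and the industry and use-case labels are illustrative assumptions, not a description of any vendor’s actual system. It flags an overlap only when a different client in the same industry has registered the same use case, and the notice never names that client.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Disclosure wording borrowed from the example in the text above.
DISCLOSURE = (
    "Another client in your competitive space is using this system "
    "for a similar purpose."
)


@dataclass(frozen=True)
class Engagement:
    client_id: str  # hypothetical account identifier
    industry: str   # assumed industry label, e.g. "hr-software"
    use_case: str   # assumed use-case label, e.g. "messaging"


@dataclass
class ConflictRegistry:
    engagements: List[Engagement] = field(default_factory=list)

    def check(self, new: Engagement) -> Optional[str]:
        """Return a disclosure notice if another client overlaps, else None."""
        for existing in self.engagements:
            if (
                existing.client_id != new.client_id
                and existing.industry == new.industry
                and existing.use_case == new.use_case
            ):
                # Disclose that an overlap exists, never who the other client is.
                return DISCLOSURE
        return None

    def register(self, new: Engagement) -> Optional[str]:
        """Run the conflict check first, then record the engagement."""
        notice = self.check(new)
        self.engagements.append(new)
        return notice


if __name__ == "__main__":
    registry = ConflictRegistry()
    print(registry.register(Engagement("acme", "hr-software", "messaging")))
    print(registry.register(Engagement("globex", "hr-software", "messaging")))
```

The hard part in practice would not be the lookup but the definitions: deciding what counts as the “same industry” or a “similar purpose” is a judgment call that this sketch reduces to exact string matches.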

Outcome 2: Companies Treat AI Platforms Like Agencies—Requiring Exclusivity

Another possibility is that companies start negotiating exclusivity clauses with AI vendors. A large enterprise might pay a premium for an exclusive model instance for messaging, so that no competitor benefits from the same insights. This could create a new pricing tier in AI services, much as consulting firms limit overlapping engagements in adjacent markets.

Outcome 3: Regulations Force AI Providers into Professional‑Ethics Frameworks

Just as financial advisors, attorneys, and auditors face regulated duties of confidentiality, loyalty, and impartiality, AI companies might eventually be required to adopt professional standards. These would apply specifically to use cases where the AI meaningfully shapes business strategy or competitive outcomes. Using AI as a more powerful form of internet search is unlikely to be affected, but entity-level decision-making would be.

Outcome 4: Industry Self-Regulation and Standards

Industry self-regulation could emerge as a proactive approach to address these challenges. AI providers might collaborate to establish shared ethical standards, transparency guidelines, and conflict-checking protocols, ensuring fair practices without the need for external regulation. This approach would rely on collective accountability and could help build trust among users while avoiding the rigidity of imposed legislation.

Why This Discussion Isn’t Happening Yet

Most of us still frame AI as a tool, not as a strategic actor. But in many organizations today, AI is already generating materials that influence millions in revenue. As its role expands, the line between tool and advisor will blur. And with that shift will come new expectations of fairness, transparency, and professional responsibility.

Right now, almost no one is asking whether AI systems owe duties similar to those of law firms, management consultants, or agencies. Yet as more companies rely on the same platforms to define their competitive identities, the question will become unavoidable.

Conflict checking may sound like an odd idea for AI today. In five years, it may be inevitable.